I knew AI was advancing, but never imagined it inside my head. That changed last year when I volunteered for an experiment at a tech lab. They said they had developed an AI that could decode brain signals into speech. Sounded impressive, but theoretical. I was curious to experience it firsthand.

I lay back as they set up sensors on my scalp. A computer monitor faced me. Once everything was ready, I was instructed to look at images on the screen and say each word in my mind only, without vocalizing.

The first picture - a cat - popped up. "Cat," I thought silently. Instantly, the AI's robotic voice blurted out "cat" from the speakers. My spine tingled.

Image after image, the AI predicted my thoughts with unbelievable accuracy. "Apple." "Child." "Love." Some words it missed, but most were eerily precise.

When it was over, I fled the lab, rattled. For days, I agonized over the feeling of exposure, as if my inner space had been hacked. I no longer had a haven for unfiltered thoughts and impulses. Just the idea of AI accessing my mind without my consent left me shaken. Would private contemplation even be possible if these technologies advance?

My experience was voluntary. But it revealed how fragile mental privacy is becoming. AI that can decode raw thought changes what it means to be human. Our inner lives help shape identity. Thoughts are precursors to speech and behavior. If intruded upon, our sense of self and agency weakens.

Yet companies and governments are incentivized to develop even more advanced, involuntary neurotech. Imagine AI mining your brain data for profit or political motives. The ethics are hazy, regulation sparse.

I may never know if my thoughts were recorded or stored from that experiment. But it changed me. It revealed a future where mental privacy is extinct, where AI overtakes the final bastion of human sovereignty - our minds. Still, I cling to the hope that by starting conversations on this issue now, we can build a future where tech uplifts human potential while respecting what makes us most beautifully human - our inner depths.

None of the above has ever happened.

To me.

But it did happen. To those who underwent the tests.

That story is how I imagine I would have felt.

Science.

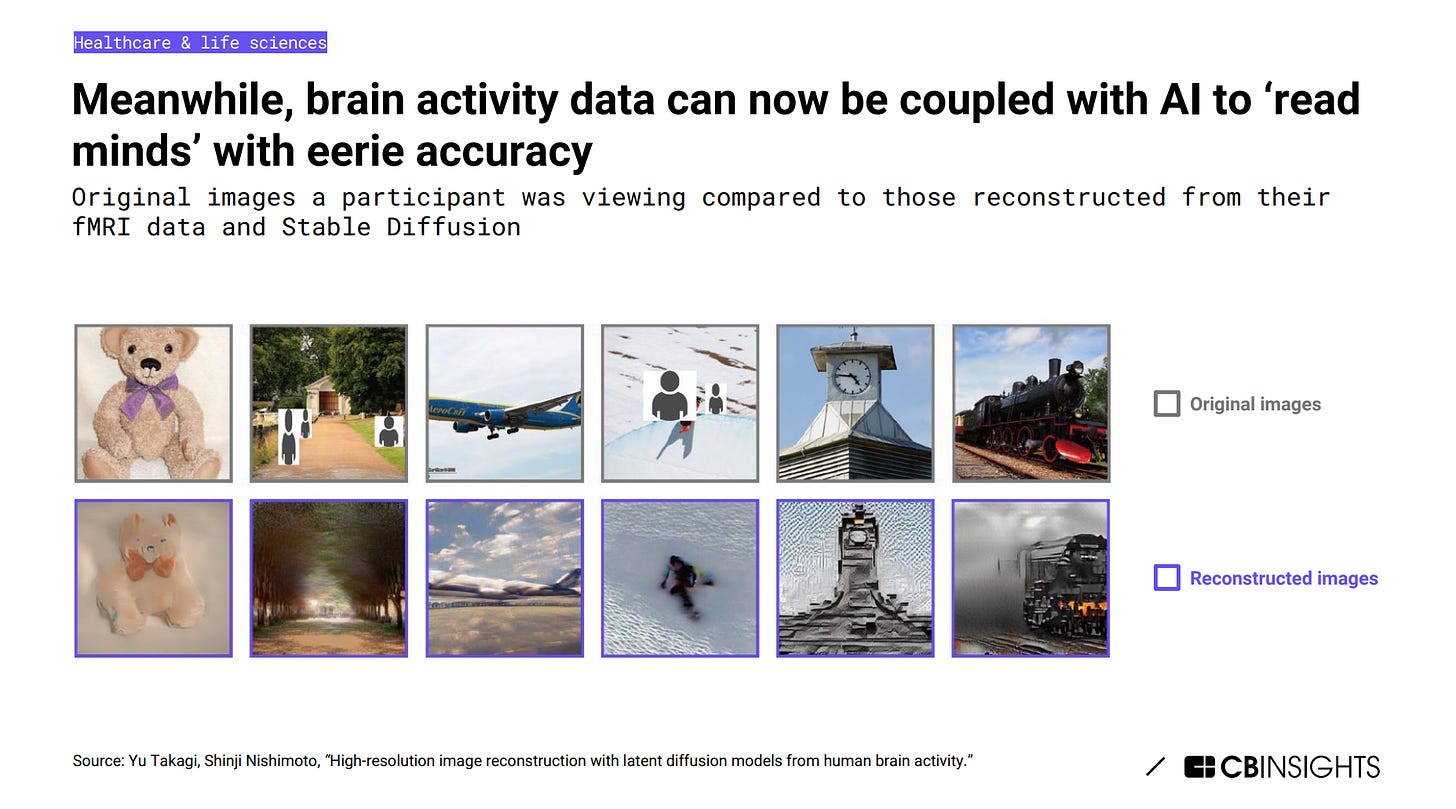

The advancements in AI that enable reading and interpreting human brain waves mark a significant leap beyond the current, sometimes naive, public discourse around AI and privacy. Research teams, including one in Singapore, have developed AI systems capable of "reading" thoughts by decoding brain wave patterns to generate images of what a person is seeing. The generated images matched the attributes (color, shape, etc.) and semantic meaning of the original images roughly 84% of the time.

While this technology has exciting potential applications, like assisting people with disabilities, it also surfaces urgent ethical questions around mental privacy and personal agency that we as a society are unprepared to address.

Imagine an AI system that can decode the words you want to say with 92-100% accuracy before you even speak them. How would this make you feel about your own thoughts and speech? Would you feel violated or empowered?

As one research participant shared: "It was incredibly strange to hear the voice you hear inside your head coming from another person. At first, I didn't recognize it as my own voice. After a while, you start to recognize the voice, because you are the one talking."

Stories like this reveal the deeply personal nature of these advancements. Beyond the statistics, they affect how we see ourselves and experience our inner worlds.

We must now broaden the discourse to encompass not just AI's capabilities, but also its impacts on human identity, dignity, and freedom. What does mental privacy mean when our unspoken thoughts are no longer unknowable? How do we maintain self-determination if technologies can manipulate our dreams or inner speech?

While targeted dream incubation may seem far-fetched, consumer dream manipulation is already being explored.

In 2023’s “The Nightmare of Dream Advertising”, the authors write:

Advertisers are attempting to market to us while we dream. This is not science fiction, but rather a troubling new reality. Using a technique dubbed “targeted dream incubation” (TDI), companies have begun inserting commercial messages into people’s dreams. Roughly, TDI works by: (1) creating an association during waking life using sensory cues (e.g., a pairing of sounds, visuals, or scents); and (2) as the subject is drifting off to sleep, the association is again introduced with the goal of triggering dreams with related subject matter. Based on a 2021 American Marketing Association survey, 77% of 400 companies surveyed plan to experiment with dream advertising — or what this Article calls “branding dreams” — by 2025.

As one bioethicist warns: "People's mental privacy is already under siege...AI that can read thoughts raises concerns about mental privacy, autonomy, and misuse of brain data that society is unprepared for."

Our conversations around AI and privacy need to evolve rapidly. We must elevate the discourse to address the complexities of cognitive liberty - including mental privacy, freedom of thought, and self-determination - in this quickly changing landscape. Only then can we ensure ethics and human well-being remain at the center as the technology races ahead.

And so the chasm between awe-inspiring technology and our responsibility in its use widens. It remains to be seen what Mama Evolution will do with all this...

Great post, terrifying and important topic. This book on my Kindle "bookshelf" is all about this, and puts forward the idea that Cognitive Liberty needs to be established as a basic human right.

The Battle for Your Brain: https://www.amazon.com/Battle-Your-Brain-Defending-Neurotechnology/dp/B0B1JNXNCH?crid=3RXU35UKCFFLL&keywords=battle+for+your+brain&qid=1707775529&sprefix=Battle+for,aps,145&sr=8-1